Hi! I am Kaizhen Tan (Chinese name: 谭楷蓁), an incoming Ph.D. student at New York University, currently pursuing my master’s degree in Artificial Intelligence at Carnegie Mellon University. I received my bachelor’s degree in Information Systems from Tongji University.

My research sits at the intersection of Urban Science, Spatial Intelligence, and Embodied AI for Urban Environments. Driven by the vision of harmonizing artificial intelligence with urban ecosystems, I aim to address the knowledge-to-action gap in digital cities: while urban digital systems are increasingly capable of monitoring conditions, modeling urban dynamics, and anticipating risks, they still struggle to support timely, place-based action. My work seeks to build spatially intelligent and socially aware urban AI systems that make cities more adaptive, inclusive, and governable.

My research integrates:

- Paradigms: Robotic Urbanization, Agentic Urban Digital Twins, Human-centered Urban Governance

- Methodologies: Multimodal Learning, Geospatial & Spatiotemporal Data Analysis, Computational Social Science

- Technical Foundations: LLMs, VLMs, AI Agents, World Models, 3D Vision, Wearable Devices

Specifically, my research agenda is organized around four key topics:

🤖 1. Robotic Urbanization & Governance

How can dense cities integrate embodied intelligence while preserving safety, accessibility, and pedestrian experience?

- Urban Readiness for Robots: Measure whether sidewalks, crossings, curbs, buildings, and public facilities can support safe robot operation.

- Human-Robot Coexistence: Study conflicts, comfort, right-of-way, and interaction norms between robots, pedestrians, cyclists, and vulnerable groups.

- Accessibility-Aware Deployment: Design routing and operation strategies that avoid reducing mobility for disabled people, older adults, and children.

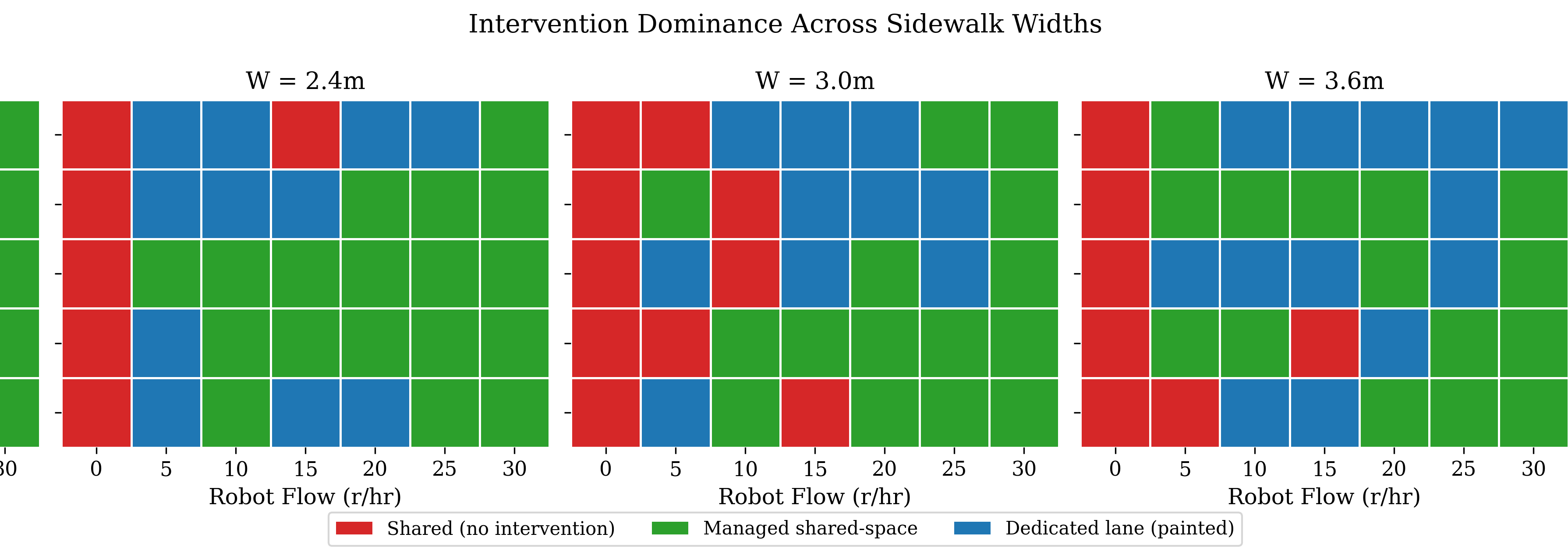

- Curbside and Low-Altitude Governance: Develop spatial rules for delivery robots and drones, including lanes, parking, corridors, privacy, noise, and safety constraints.

- Public Acceptance and Accountability: Model public perception, responsibility boundaries, and governance mechanisms for city-scale deployment.

🏙️ 2. Agentic Urban Digital Twins

How can urban digital twins evolve from static city models into continuously updated systems for sensing, reasoning, and policy support?

- Urban Foundation Representations: Fuse remote sensing, street-view imagery, trajectories, POI, IoT, text, and 3D data into unified urban representations.

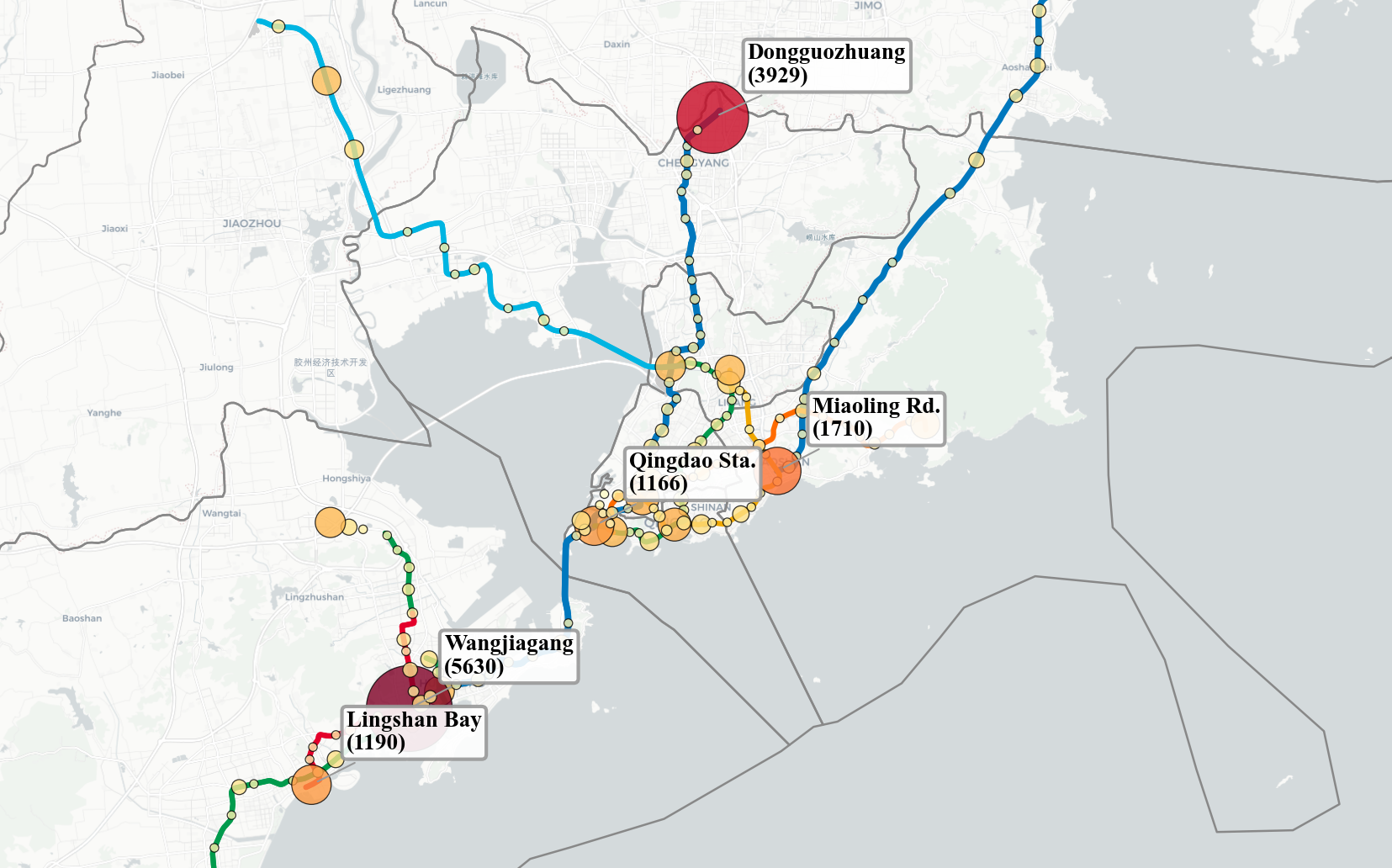

- Continuous Urban Sensing: Use robots, drones, mobile devices, and wearables as emerging data sources to update urban conditions over time.

- 3D City Understanding: Support geo-localization, semantic mapping, and spatial querying across point clouds, meshes, and 3D Gaussians.

- Urban Agents: Build LLM and VLM agents for map reasoning, spatial RAG, policy QA, public service assistance, and planning workflows.

- Policy Sandbox: Enable what-if simulation, risk assessment, and implementation checks for urban management and public policy.

🎨 3. Multimodal Social Sensing

How can multimodal human-centered data reveal urban experience, social needs, and governance priorities?

- AI-Enhanced Geospatial Analysis: Link urban form, environment, mobility, and public services with human behavior and social outcomes.

- Pedestrian Experience and Accessibility: Detect walking barriers, sidewalk quality, perceived safety, and mobility challenges in everyday urban environments.

- Urban Perception and Visual Aesthetics: Quantify streetscape quality, neighborhood imagery, and place identity to support design and regeneration decisions.

- Socio-Cultural Signals: Extract place-based narratives from text, images, and online platforms to understand local identity and public concerns.

- Participatory Governance: Translate social sensing results into explainable tools for planners, communities, and decision-makers.

🚀 4. Spatial Intelligence & World Models

How can spatial intelligence provide reliable reasoning, memory, and simulation capabilities for urban AI systems?

- Embodied Spatial Representations: Unify geometry, semantics, physics, affordance, and action for robots, agents, and urban digital twins.

- Urban World Models: Learn predictive models of how urban spaces change and how agents interact with physical and social environments.

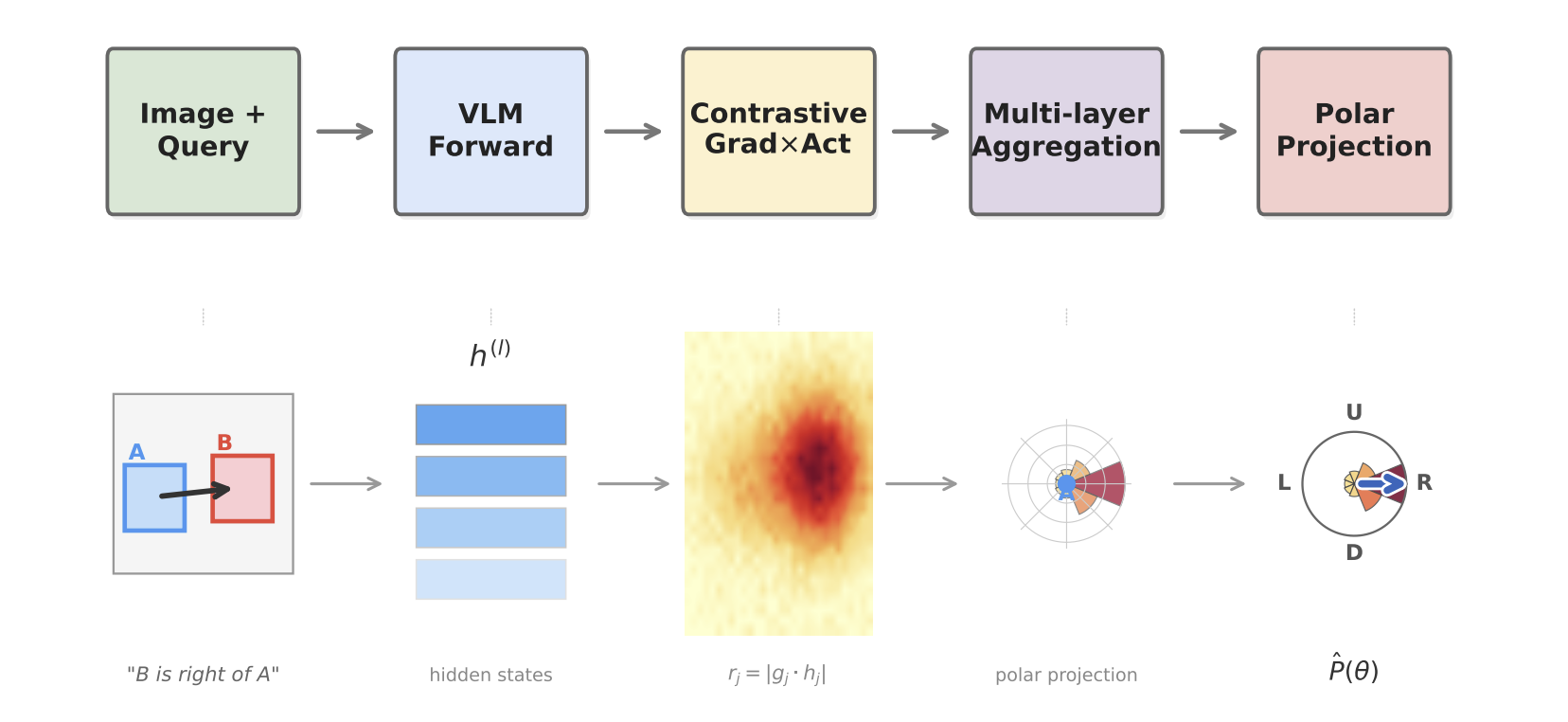

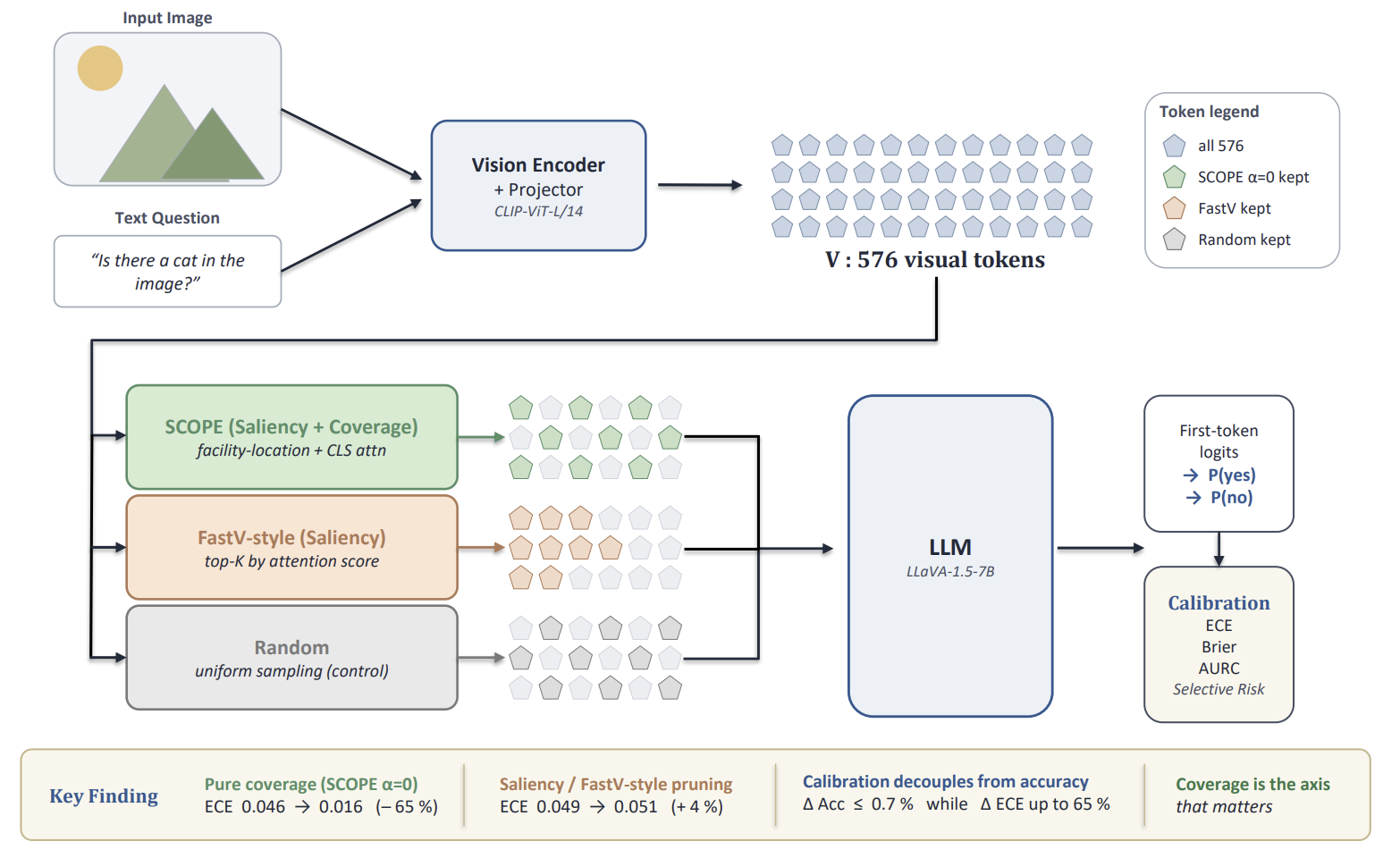

- Spatial Reasoning with VLMs: Improve map understanding, 3D reasoning, scene interpretation, and location-aware decision-making.

- Lifelong Updating and Memory: Develop mechanisms for continuous learning, forgetting control, uncertainty tracking, and safe model updates.

- Interpretable and Robust Decision Support: Make spatial AI systems transparent enough for planning, governance, and real-world deployment.

🔥 News

- 2026.03: 🎓 I am pleased to share that I will begin my Ph.D. at New York University in Fall 2026 under the supervision of Prof. Chenghe Guan and Prof. Zhan Guo.

- 2026.01: 🎉 The abstract co-authored with Prof. Fan Zhang has been accepted for the XXV ISPRS Congress 2026. See you in Toronto!

- 2025.12: 🎉 Our paper, led by my senior labmate Dr. Weihua Huan and co-authored with Prof. Wei Huang at Tongji University, was accepted by GIScience & Remote Sensing. I am honored to have contributed as second author, and big congratulations to Dr. Huan!

- 2025.10: 🔭 Joined Prof. Yu Liu and Prof. Fan Zhang’s team at Peking University as a remote research assistant.

- 2025.08: 🎉 Delivered an oral presentation at Hong Kong Polytechnic University after our paper was accepted to the Global Smart Cities Summit cum The 4th International Conference on Urban Informatics (GSCS & ICUI 2025).

- 2025.07: 🎉 My undergraduate thesis was accepted by the 7th Asia Conference on Machine Learning and Computing (ACMLC 2025).

- 2025.06: 🎓 Graduated from Tongji University. Grateful for the journey and excited to continue my studies at CMU.

- 2025.04: 🔭 Completed the SITP project under the supervision of Prof. Yujia Zhai in the College of Architecture and Urban Planning.

- 2025.01: 💼 Joined Shanghai Artificial Intelligence Laboratory as an AI Product Manager Intern.

- 2024.09: 🌏 Conducted research at A*STAR in Singapore under the supervision of Dr. Yicheng Zhang and Dr. Sheng Zhang.

- 2024.04: 🔭 Began my academic journey at Prof. Wei Huang’s lab in the College of Surveying and Geo-Informatics, Tongji University.

📖 Education

💼 Experience

🔭 Research

- 2026.04 - Present, Research Assistant, Shanghai Key Laboratory of Urban Design and Urban Science (LOUD), NYU Shanghai, China

- 2025.10 - 2026.03, Research Assistant, Spatio-Temporal Social Sensing Lab (S3-Lab), Peking University, China

- 2024.09 - 2024.12, Research Officer Intern, A*STAR Institute for Infocomm Research, Singapore

- 2024.04 - 2025.04, Research Assistant, College of Architecture and Urban Planning, Tongji University, China

- 2024.04 - 2024.12, Research Assistant, College of Surveying and Geo-Informatics, Tongji University, China

💻 Industry

- 2025.01 - 2025.04, AI Product Manager, Shanghai Artificial Intelligence Laboratory, China

- 2023.01 - 2023.02, Data Analyst, Shanghai Qiantan Emerging Industry Research Institute, China

📝 Selected Papers

Peer-Reviewed

Preprints

🔬 Projects

💬 Presentations

-

2026.07 - XXV ISPRS Congress 2026

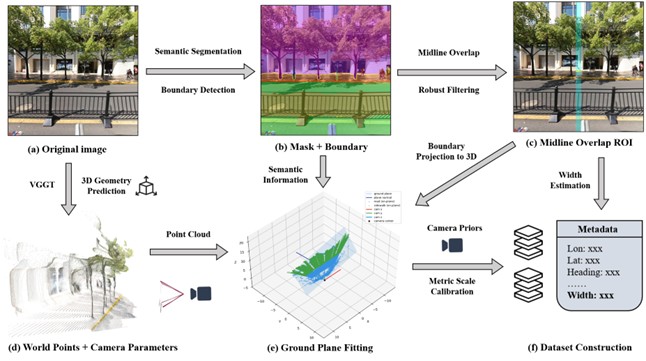

UrbanVGGT: Scalable Sidewalk Width Estimation from Street View Images

Toronto, Canada -

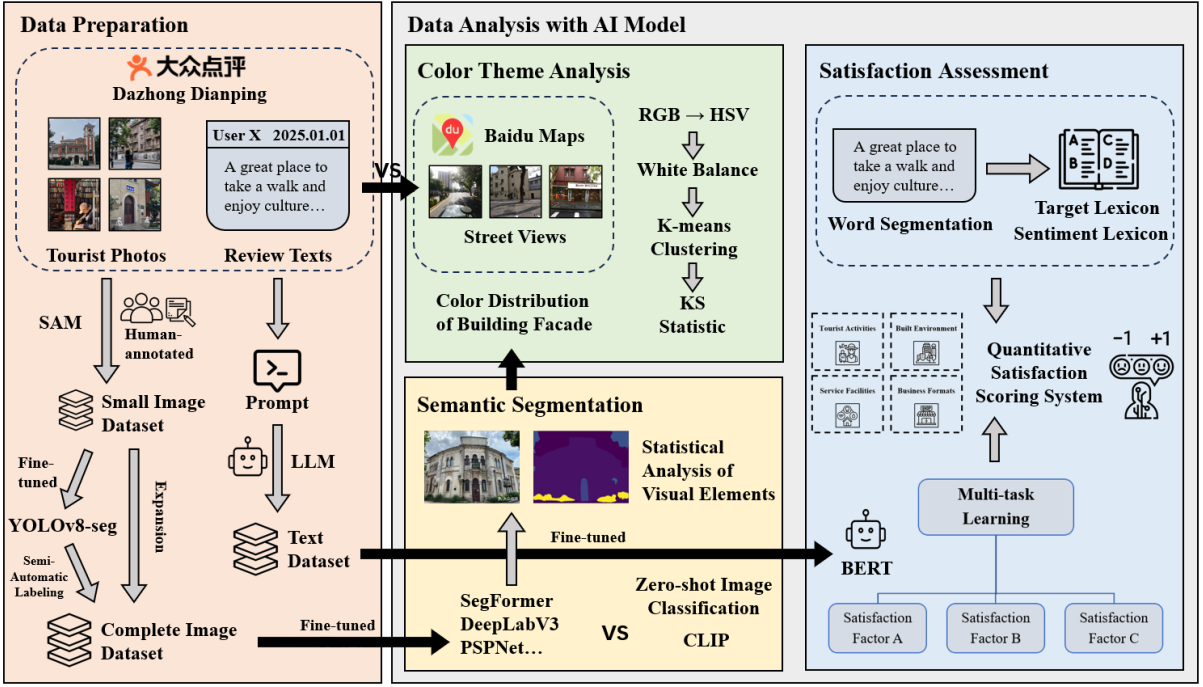

2025.08 - Global Smart Cities Summit cum The 4th International Conference on Urban Informatics (GSCS & ICUI 2025)

A Multidimensional AI-powered Framework for Analyzing Tourist Perception in Historic Urban Quarters: A Case Study in Shanghai

Hong Kong Polytechnic University (PolyU), Hong Kong SAR, China -

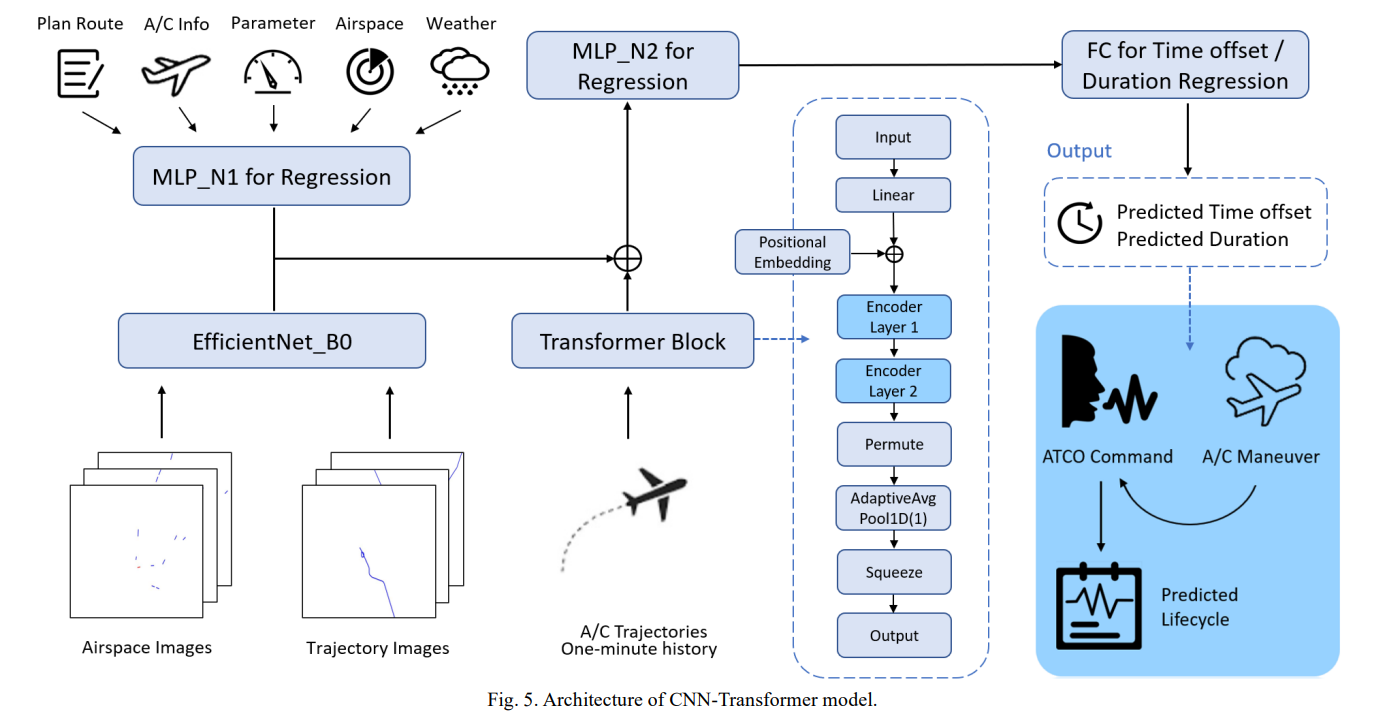

2025.07 - 7th Asia Conference on Machine Learning and Computing (ACMLC 2025)

Multimodal Deep Learning for Modeling Air Traffic Controllers Command Lifecycle and Workload Prediction in Terminal Airspace

Hong Kong SAR, China

📫 Contact

- Email (CMU): kaizhent@cmu.edu

- Email (NYU): kt3275@nyu.edu

- Email (personal): wflps20140311@gmail.com

Please feel free to reach out if any of these research directions resonate with you. I'd be happy to chat!